About This Project

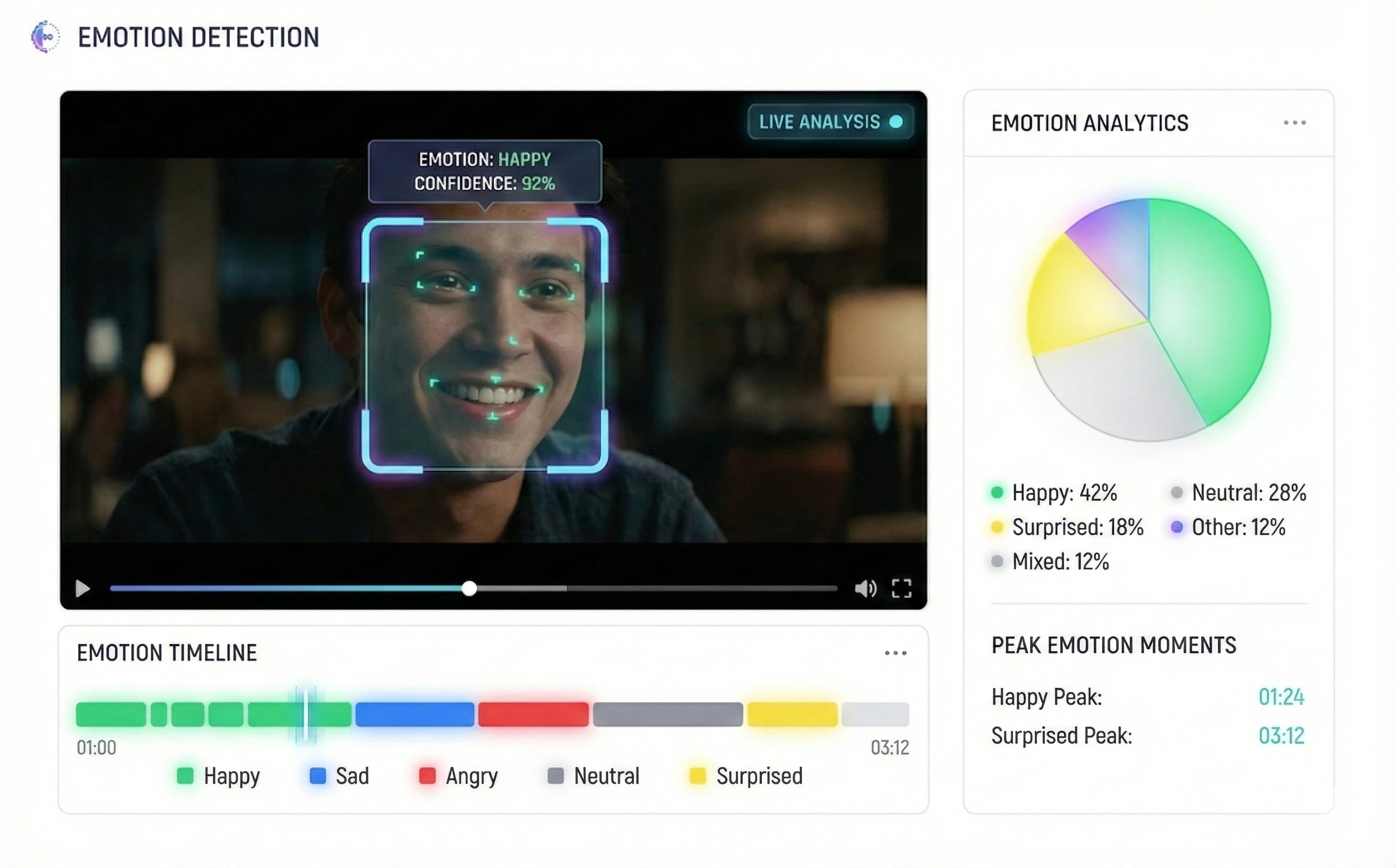

A real-time emotion recognition system, built for major entertainment studios including Marvel and Big Brother, that analyzes facial expressions during video playback. Registered users watched trailers while WebRTC captured their facial expressions in real time. The system processed recordings frame by frame using deep learning models, classifying 6 emotion types and mapping them to specific moments in each trailer, so studios could see how audiences responded emotionally at each point. It processed 100,000+ sessions with 98% accuracy across diverse demographics.
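Mapping per-frame results to moments in a trailer can be sketched as follows. This is a minimal illustration, not the project's code: the function name `build_timeline`, the one-second bucket size, and the emotion labels are all assumptions, since the write-up does not name the 6 emotion types.

```python
from collections import Counter, defaultdict

FPS = 30  # frame rate stated in the write-up


def build_timeline(frame_labels, fps=FPS):
    """Collapse one label per frame into one dominant label per second.

    frame_labels: list of emotion labels, index = frame number.
    Returns {second_of_playback: most frequent label in that second}.
    """
    buckets = defaultdict(Counter)
    for frame_index, label in enumerate(frame_labels):
        buckets[frame_index // fps][label] += 1
    return {
        second: counts.most_common(1)[0][0]
        for second, counts in sorted(buckets.items())
    }


# Hypothetical labels for two seconds of playback (60 frames).
labels = ["neutral"] * 30 + ["surprise"] * 20 + ["joy"] * 10
timeline = build_timeline(labels)
print(timeline)  # {0: 'neutral', 1: 'surprise'}
```

Bucketing by second keeps the timeline small enough to overlay on a trailer's playhead; a finer or coarser bucket would work the same way.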

Technologies Used

Key Features

Challenges & Solutions

Challenge 1: Real-time Processing at 30 FPS

Detecting emotions in video frames in real time for multiple concurrent users required efficient GPU-accelerated models. We optimized our TensorFlow models with quantization and pruning and ran them on GPU compute clusters, achieving 30 FPS per user stream while keeping latency under 100 ms.
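The timing constraints behind these numbers can be worked out with simple arithmetic. The sketch below uses the stated 30 FPS and 100 ms figures; the 5 ms per-frame inference time is a purely hypothetical value chosen for illustration, and the function names are not from the project.

```python
def frame_interval_ms(fps: int) -> float:
    """Time between consecutive frames of one stream."""
    return 1000.0 / fps


def frames_in_flight(fps: int, latency_budget_ms: int) -> int:
    """Frames one stream delivers within the latency budget window."""
    return (latency_budget_ms * fps) // 1000


def streams_per_worker(fps: int, inference_ms: float) -> int:
    """Streams a single worker can sustain when it processes frames
    one at a time, each taking inference_ms (an assumed figure)."""
    return int(1000 // (fps * inference_ms))


print(round(frame_interval_ms(30), 1))  # 33.3
print(frames_in_flight(30, 100))        # 3
print(streams_per_worker(30, 5.0))      # 6
```

At 30 FPS a new frame arrives roughly every 33 ms, so a 100 ms budget leaves room for about three frames to be in the pipeline at once per stream.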

Challenge 2: High Accuracy Across Diverse Demographics

Achieving 98% accuracy across different lighting conditions, camera angles, face sizes, and diverse demographics required extensive training data. We fine-tuned pre-trained models on curated datasets representing entertainment audiences and implemented fallback detection strategies.
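The write-up does not describe the fallback strategies in detail. One common pattern, shown here only as an assumption, is to try detectors in order and accept the first result that clears a confidence threshold. The detector stubs, the 0.8 threshold, and the labels are all hypothetical.

```python
from typing import Callable, Optional, Sequence, Tuple

Detection = Tuple[str, float]  # (label, confidence in [0, 1])
Detector = Callable[[bytes], Optional[Detection]]


def detect_with_fallback(
    frame: bytes,
    detectors: Sequence[Detector],
    min_confidence: float = 0.8,  # assumed threshold
) -> Optional[Detection]:
    """Return the first detection that meets min_confidence, else None."""
    for detector in detectors:
        result = detector(frame)
        if result is not None and result[1] >= min_confidence:
            return result
    return None  # caller can skip the frame rather than record a weak guess


# Hypothetical stand-ins for real models.
def primary(frame: bytes) -> Optional[Detection]:
    return ("joy", 0.55)  # low confidence, e.g. poor lighting


def secondary(frame: bytes) -> Optional[Detection]:
    return ("joy", 0.91)


print(detect_with_fallback(b"frame-bytes", [primary, secondary]))  # ('joy', 0.91)
```

Returning `None` when no detector is confident leaves a gap in the timeline instead of a low-quality label, which is one reasonable way to protect an accuracy target.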

Challenge 3: Privacy and Compliance

Capturing and processing facial biometric data required strict privacy measures and compliance with GDPR/CCPA. We processed faces locally where possible, applied end-to-end encryption, deleted data automatically after processing, and obtained explicit user consent with detailed privacy controls.
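Automatic deletion after processing can be enforced structurally, so cleanup does not depend on every code path remembering to do it. The sketch below is an assumption about how that might look, using a context manager; the name `ephemeral_recording` and the `.webm` suffix are illustrative only.

```python
import os
import tempfile
from contextlib import contextmanager


@contextmanager
def ephemeral_recording(data: bytes):
    """Write a recording to a temp file and always delete it afterwards,
    even if processing raises an exception."""
    fd, path = tempfile.mkstemp(suffix=".webm")
    try:
        with os.fdopen(fd, "wb") as handle:
            handle.write(data)
        yield path
    finally:
        if os.path.exists(path):
            os.remove(path)


with ephemeral_recording(b"fake video bytes") as path:
    size = os.path.getsize(path)  # stand-in for frame-by-frame analysis

print(size)                  # 16
print(os.path.exists(path))  # False
```

Note that `os.remove` only unlinks the file. Depending on the storage layer, meeting a regulatory deletion requirement may call for additional measures such as encrypted volumes or key destruction.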

Challenge 4: Scaling to Enterprise Load

Scaling from a single user to an enterprise load of 100,000+ sessions required a distributed architecture. We built microservices for face detection, emotion classification, and result aggregation, deployed them on Kubernetes, and implemented intelligent load balancing with session affinity.
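Session affinity means every frame of a session lands on the same worker. The project's actual balancing logic is not described, so the following is only a sketch of one technique that provides this property, rendezvous (highest-random-weight) hashing. The worker names and session ID are hypothetical.

```python
import hashlib
from typing import Sequence


def pick_worker(session_id: str, workers: Sequence[str]) -> str:
    """Pick a worker for a session using rendezvous hashing.

    Each (worker, session) pair gets a deterministic score; the session
    goes to the highest-scoring worker. Removing a worker only moves
    the sessions that were assigned to that worker.
    """
    def score(worker: str) -> int:
        digest = hashlib.sha256(f"{worker}:{session_id}".encode()).digest()
        return int.from_bytes(digest[:8], "big")

    return max(workers, key=score)  # raises ValueError if workers is empty


workers = ["classifier-0", "classifier-1", "classifier-2"]
first = pick_worker("session-abc", workers)
again = pick_worker("session-abc", workers)
print(first == again)  # True
```

In a Kubernetes deployment, affinity is often handled at the Service or ingress layer instead; the hashing approach above is useful when routing decisions are made inside the application.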

Architecture & Design

The system uses a distributed microservices architecture. WebRTC provides encrypted peer-to-peer video transmission. A face detection microservice identifies faces using OpenCV. An emotion classification service runs TensorFlow models with CUDA GPU acceleration. Redis Streams carry frame results between services in real time. PostgreSQL stores session data and emotion timelines. Node.js APIs handle session management and analytics queries. Kubernetes scales the services across multiple nodes based on load. The entire pipeline is optimized for sub-100 ms latency per frame.

Results & Impact Metrics

98% Emotion Detection Accuracy

Achieved 98% accuracy across diverse demographics, lighting, and camera angles

100,000+ Sessions Processed

Successfully processed over 100,000 emotion detection sessions with enterprise reliability

30 FPS Real-time Processing

Frame-by-frame emotion detection at video playback speed for natural user experience

100% Privacy Compliant

Full GDPR/CCPA compliance with automatic data deletion and end-to-end encryption

Key Learnings & Insights

This is a proprietary project developed for a product-based company. Code and live demos are not publicly available due to company confidentiality policies.
